Xiaomi MiMo Review: 60M Tokens for $6, Faster Than GPT-5.4

I spent two weeks testing Xiaomi MiMo after comparing ChatGPT Go, DeepSeek v4, and OpenCode Go. Here is why MiMo won on price, speed, and multimodal support.

I had a simple rule when looking for my next AI subscription: it has to be cheap. Not affordable. Cheap. That search is what led me to Xiaomi MiMo.

I work as a developer doing both backend coding and UI/UX work. That means I need a model that handles code and images. Every time I looked at US model pricing, it felt like paying rent for a studio apartment when all I needed was a parking spot.

So I started digging into the alternatives. Chinese AI companies have been shipping fast, pricing aggressively, and honestly delivering real quality. Here is how my research went and why I ended up with Xiaomi MiMo.

The Research: Three Candidates, One Winner

ChatGPT Go: Cheap Price, Weak Limits

ChatGPT Go launched in Indonesia at IDR 75,000 per month. That is roughly $4.60. On paper, it sounds like a steal. But when I looked closer at the limits, something felt off.

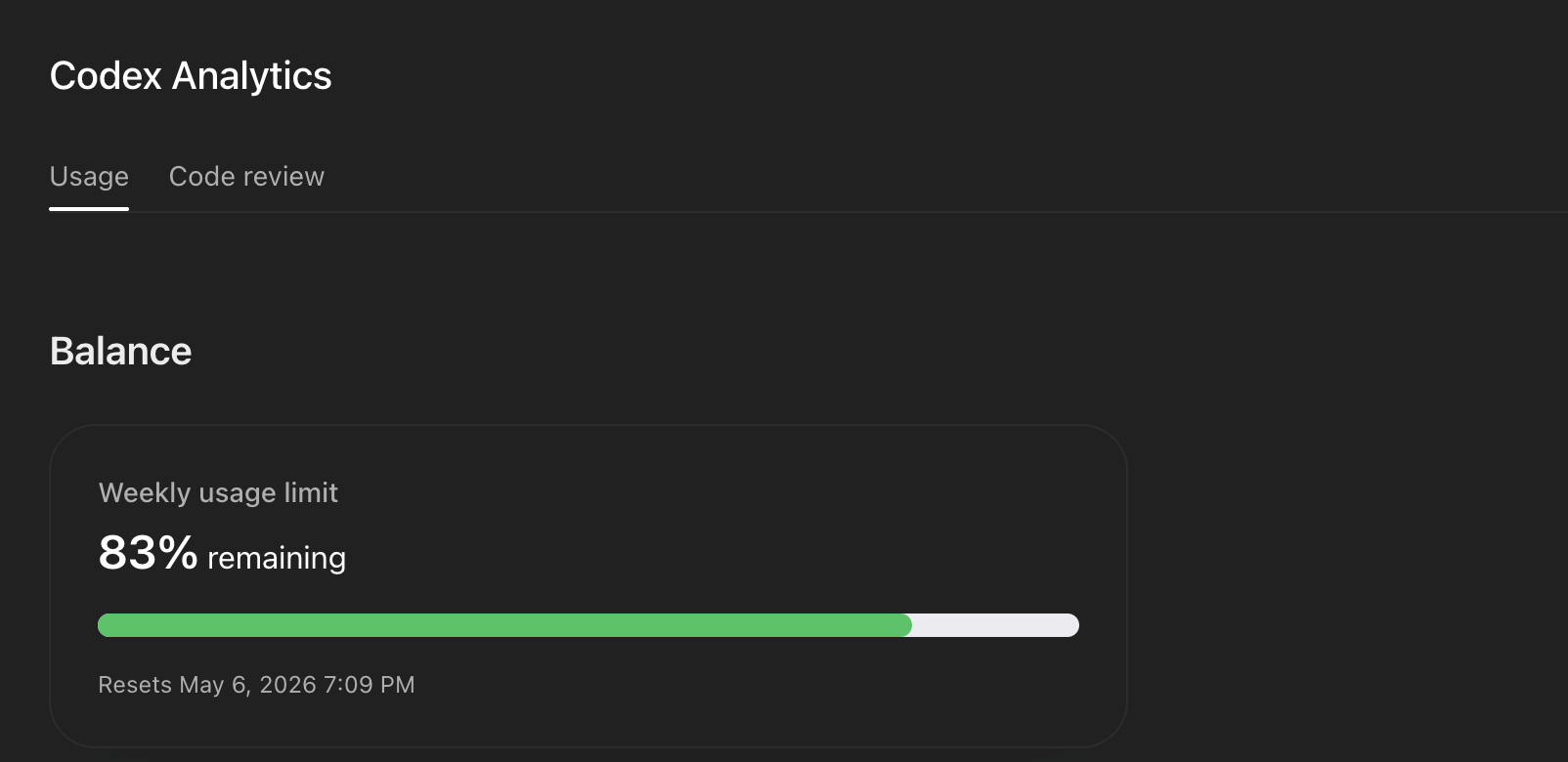

ChatGPT Plus and Pro users get a 5-hour usage window that resets regularly. ChatGPT Go does not. Instead, it runs on a weekly usage limit, just like the Codex limit on the ChatGPT free tier.

The screenshot above, taken from the Codex analytics dashboard, says it all: “Weekly usage limit, 83% remaining, Resets May 6.” Heavy users will burn through that weekly budget fast. The cheap price makes sense once you realize you are getting a free-tier experience with a paid-tier label. ChatGPT Go was out.

DeepSeek v4: Text-Only Kills It for UI Work

DeepSeek v4 was my next target. The model is genuinely impressive for code and reasoning. Pricing is extremely competitive. I had it bookmarked for weeks.

Then I checked the multimodal support.

DeepSeek v4 is text-focused. It does not handle image or video input the way I need. When you do UI/UX work, you constantly share screenshots with your AI, ask it to review designs, or feed it reference images for layout decisions. A text-only model cannot do that job.

DeepSeek was eliminated. Great model, wrong fit.

OpenCode Go: Good, But MiMo Beat It on Value

OpenCode Go came in at $5 for the first month and $10 per month after that. The first month price is attractive. But $10/month ongoing is a real ask, especially when you need a lot of tokens and the pricing jumps after month one.

I needed maximum tokens at minimum ongoing cost. Not a discounted trial followed by full price.

When I compared OpenCode Go to Xiaomi MiMo, MiMo came in at $5.28 for the first month and $6 per month after. That is a much smaller jump from the first month to renewal, and the renewal price stays well under $10.

More importantly, MiMo’s Lite Monthly Plan includes 60 million tokens per month. That number is enormous. I checked it twice.
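To make the comparison concrete, here is a rough sketch of the arithmetic using only the prices quoted above. It assumes the prices stay fixed and that you keep the plan for a full year. The function names are mine, for illustration only. I do not have a token allowance figure for OpenCode Go, so tokens per dollar is computed for MiMo alone.

```python
# Prices quoted in this review (USD). Both plans discount the first month.
PLANS = {
    "OpenCode Go": {"first_month": 5.00, "renewal": 10.00},
    "Xiaomi MiMo Lite": {"first_month": 5.28, "renewal": 6.00},
}

MIMO_TOKENS_PER_MONTH = 60_000_000


def cost_over(plan: dict, months: int) -> float:
    """Total cost for `months` months: one first-month price, then renewals."""
    if months <= 0:
        return 0.0
    return round(plan["first_month"] + plan["renewal"] * (months - 1), 2)


def tokens_per_dollar(tokens: int, price: float) -> float:
    """How many tokens one dollar buys at a given monthly price."""
    return tokens / price


if __name__ == "__main__":
    for name, plan in PLANS.items():
        print(f"{name}: ${cost_over(plan, 12):.2f} for 12 months")
    per_dollar = tokens_per_dollar(MIMO_TOKENS_PER_MONTH, 6.00)
    print(f"MiMo at renewal: {per_dollar:,.0f} tokens per dollar")
```

Over twelve months that works out to $115.00 for OpenCode Go versus $71.28 for MiMo, and 10 million tokens per dollar at MiMo's renewal price.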

Why I Chose Xiaomi MiMo

The math was straightforward. MiMo gave me the most tokens per dollar, supported multimodal input, and stayed cheap on renewal. Here is how the Lite Monthly Plan breaks down:

- First month: $5.28

- Ongoing: $6 per month

- Token budget: 60,000,000 credits per month

- Models included: MiMo-V2.5-Pro, MiMo-V2.5, MiMo-V2.5-TTS, MiMo-V2-Pro, and more

- Coding tool compatibility: Claude Code, OpenCode, OpenClaw, KiloCode

That last point matters. MiMo integrates directly with the coding tools I already use. I did not need to change my workflow to try a new model. I pointed OpenCode at MiMo’s API endpoint and started working.

Two Weeks of Testing

The TPS is Genuinely Fast

TPS means tokens per second, the speed at which the model generates output. This is one of the most practical measurements for a daily coding assistant. A slow model makes you wait. A fast model keeps you in flow.
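If you want a number rather than a feeling, TPS can be estimated by timing a streamed response. The sketch below is a simplified approach, not how I measured anything in this review: it counts each streamed chunk as one token, which is only an approximation, and `measure_tps` is a hypothetical helper name.

```python
import time
from typing import Callable, Iterable


def measure_tps(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Estimate tokens per second for a streamed response.

    Each item yielded by `stream` is counted as one token. Real APIs may
    pack several tokens into one chunk, so treat the result as a rough guide.
    """
    start = clock()
    count = 0
    for _ in stream:
        count += 1
    elapsed = clock() - start
    # Guard against a zero-length interval on very fast or empty streams.
    return count / elapsed if elapsed > 0 else 0.0
```

Pass it any iterable that yields chunks as they arrive, for example the streaming iterator your client library returns. The `clock` parameter exists so the function can be tested with a fake timer.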

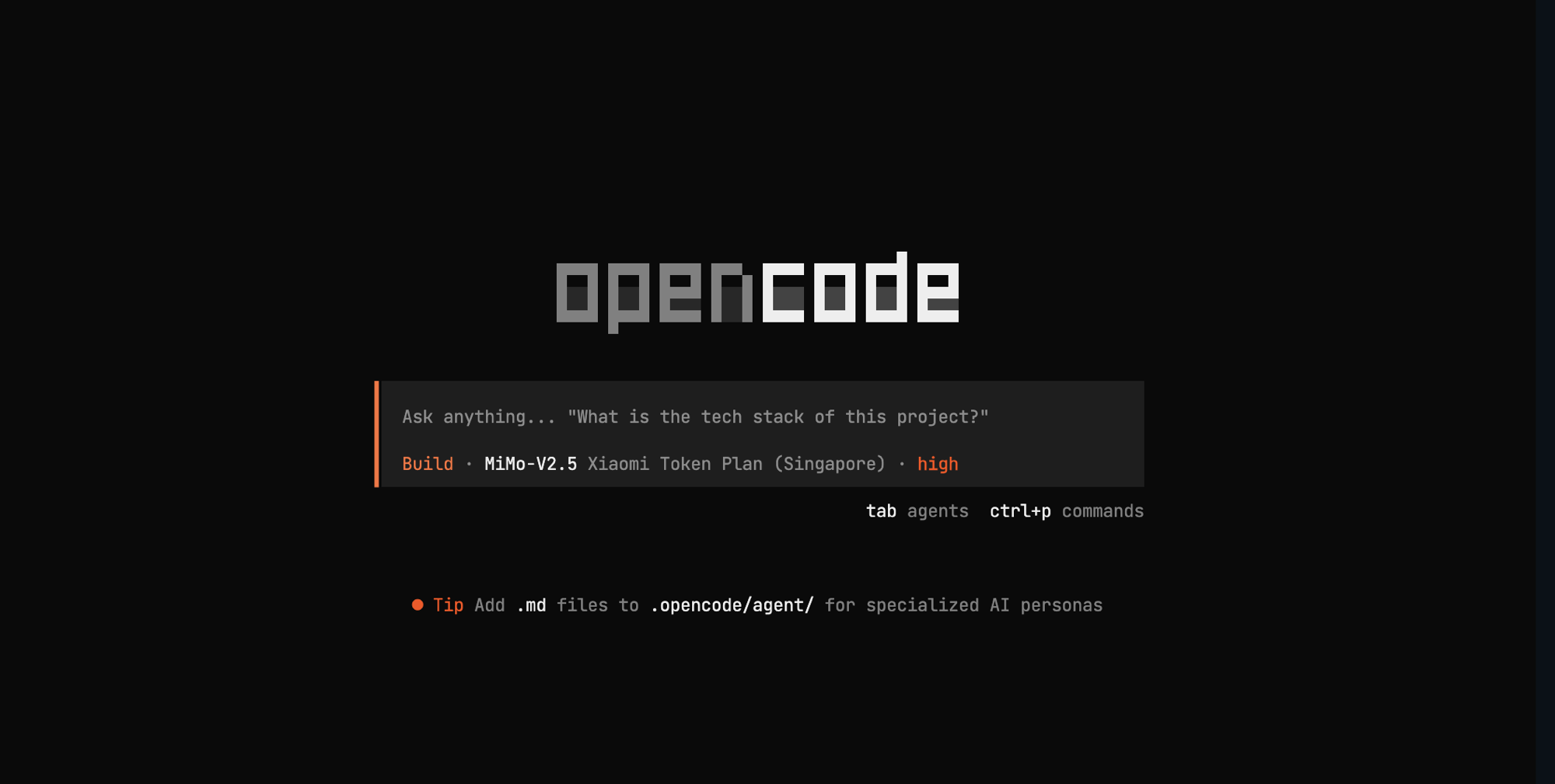

I tested MiMo through OpenCode and the speed was immediately noticeable.

The terminal shows “MiMo-V2.5 Xiaomi Token Plan (Singapore) high.” I read that “high” indicator as the performance mode active during the session. Output felt faster than what I had been getting from other models at similar price points, and responses came back quickly even during complex code generation tasks.

Usage Monitoring is Clean

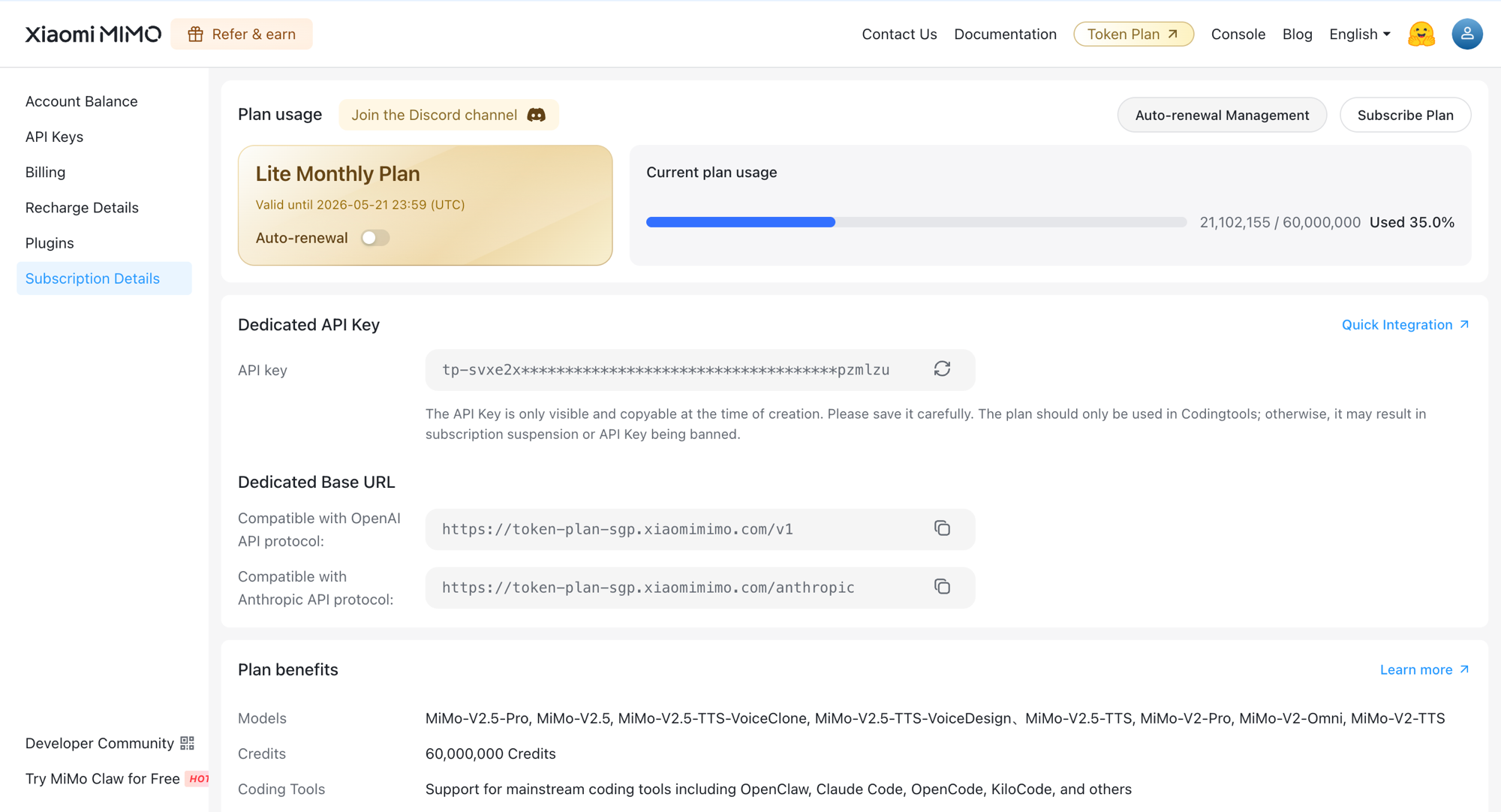

One thing I appreciate about MiMo is the subscription dashboard. It shows your token usage clearly, gives you a progress bar, and shows how much of your monthly budget remains. You know exactly where you stand.

At the time of that screenshot, I had used 21,102,155 out of 60,000,000 tokens, or about 35% of the budget. The plan is valid until May 21, 2026. This kind of visibility is exactly what you want: no surprises, no guessing at usage. It works like the dashboards on Claude Code and ChatGPT Codex, both of which I have used before.
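For anyone who wants to sanity-check the dashboard, the arithmetic behind those figures is simple. This is just a sketch with the numbers from my screenshot; `usage_summary` is a name I made up.

```python
USED = 21_102_155
BUDGET = 60_000_000


def usage_summary(used: int, budget: int) -> dict:
    """Return remaining tokens and percent of the budget consumed."""
    return {
        "remaining": budget - used,
        "percent_used": round(used / budget * 100, 1),
    }


if __name__ == "__main__":
    summary = usage_summary(USED, BUDGET)
    print(f"{summary['percent_used']}% used, {summary['remaining']:,} tokens left")
```

That leaves 38,897,845 tokens for the rest of the billing period.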

Lucky Timing on the Upgrade

I subscribed during the MiMo v2 period. About a week later, MiMo v2.5 launched. Normally you might expect the new version to carry your existing token count forward. Instead, the counter reset to zero.

That meant I got a fresh 60 million token budget on the newer, faster model. Pure luck, but I will take it.

MiMo v2.5 vs GPT-5.4 and Sonnet 4.6

After using MiMo v2.5 for nearly two weeks, I ran some informal comparisons against GPT-5.4 and Claude Sonnet 4.6. My impression is that MiMo v2.5 generates output faster than both, and at a lower price. Response quality for coding tasks is strong. It handles the work I throw at it without slowing down.

This is not a formal benchmark. This is two weeks of real usage on real projects. The model has not let me down.

Who Should Try MiMo

MiMo makes the most sense if you:

- Do both code and UI/UX work and need multimodal input

- Use OpenCode, Claude Code, or similar CLI coding tools

- Want a high token ceiling without paying $20 per month for it

- Are comfortable with Chinese tech products and their API infrastructure

If your work is purely text or you only need occasional AI assistance, the token budget may be more than you need. But if you push your AI model daily and track token usage like a resource, 60 million per month at $6 is a difficult number to argue with.

How to Get Started

MiMo is available through the Xiaomi MiMo platform. You sign up, choose the Lite Monthly Plan, grab your API key and base URL from the subscription details page, and configure your coding tool to point at MiMo’s endpoint.

The base URL supports both OpenAI and Anthropic API protocols. If your tool already works with one of those formats, switching to MiMo takes about two minutes.
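As a rough illustration of the OpenAI-style route, the sketch below builds a chat request using only the Python standard library. Several things here are assumptions on my part: the environment variable names, the placeholder base URL, the `mimo-v2.5` model ID, and the `/chat/completions` path (the usual OpenAI convention). Copy the real base URL and model names from your subscription details page.

```python
import json
import os
import urllib.request

# Placeholders only: replace with the values from your subscription page.
BASE_URL = os.environ.get("MIMO_BASE_URL", "https://example.invalid/v1")
API_KEY = os.environ.get("MIMO_API_KEY", "sk-placeholder")


def build_chat_request(
    base_url: str, api_key: str, model: str, prompt: str
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # "mimo-v2.5" is an assumed model ID, not confirmed by the platform docs.
    req = build_chat_request(BASE_URL, API_KEY, "mimo-v2.5", "Say hello")
    print(req.get_method(), req.full_url)
    # To actually send it: urllib.request.urlopen(req)
```

In practice you rarely write this by hand, since tools like OpenCode only need the base URL and key in their config. The sketch just shows what "OpenAI-compatible" means at the request level.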

The first month is $5.28. See how 60 million tokens feels before committing to the full $6 renewal.

Final Verdict

ChatGPT Go has attractive pricing but weak limits. DeepSeek v4 is strong on text but falls short for multimodal work. OpenCode Go is reasonable, but its renewal price puts it above MiMo for ongoing use.

MiMo v2.5 is fast, multimodal, compatible with the major coding tools, and gives you 60 million tokens per month for $6 after the first month. Two weeks in, I have no regrets about choosing it.

If you have been hunting for the sweet spot between price and capability in the current AI market, MiMo is worth a serious look.